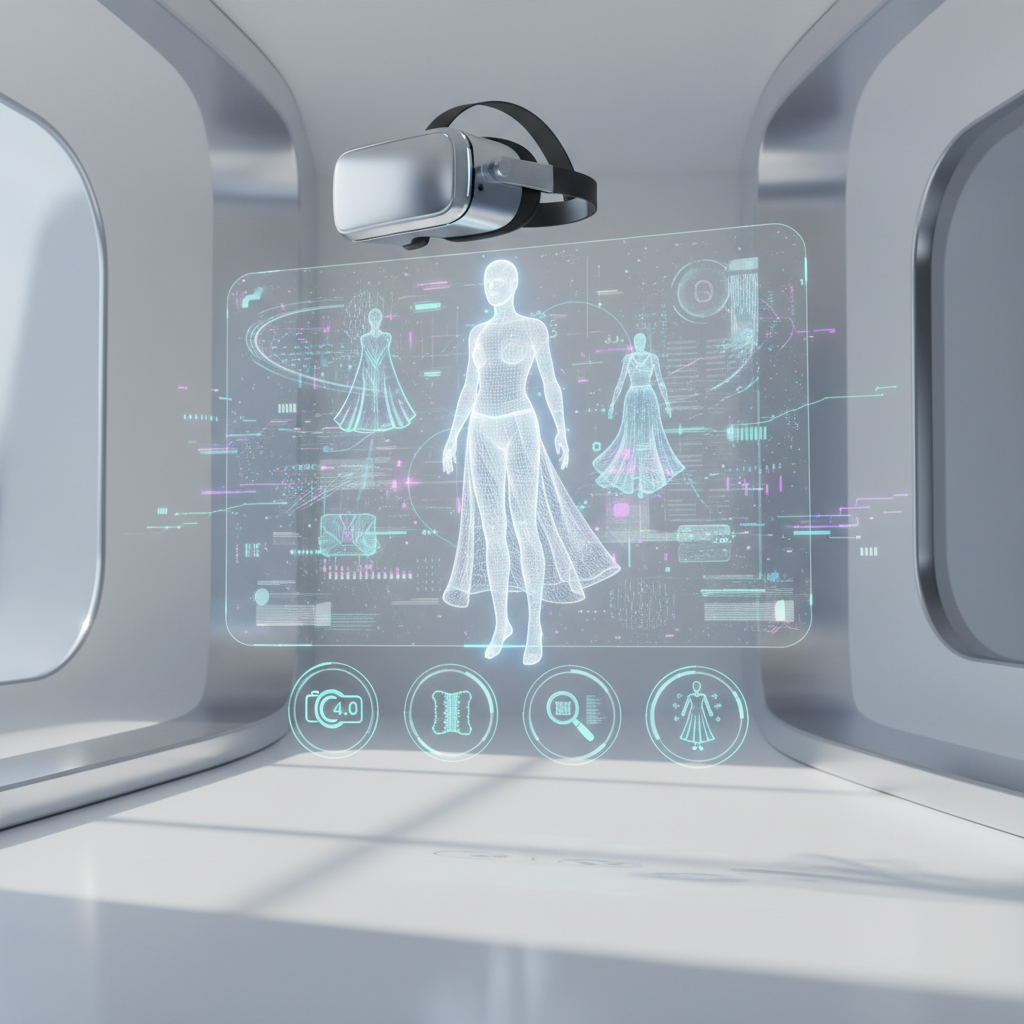

In a significant step forward for augmented reality (AR) creation, Snap has released Lens Studio 4.0, the latest version of its AR development platform, adding 3D Body Mesh and other features and upgrading Scan to act as a fashion assistant. The update gives creators and brands a broader toolkit for immersive experiences, from realistic virtual try-ons to multiplayer AR environments. For those looking to explore the cutting edge of mixed reality accessories and experiences, MetanexusXR offers a curated selection of innovative products and insights into the evolving landscape of immersive technology.

Unleashing Creativity with 3D Body Mesh in Lens Studio 4.0

The centerpiece of this update is 3D Body Mesh. The feature lets developers track a person's full body in three dimensions in real time, so that AR effects conform to the human form. Imagine a user trying on a virtual jacket that drapes realistically, or digital flames that appear to dance across their arms, all in real time. 3D Body Mesh goes beyond simple overlays: it adapts to body movements, enabling interactive and believable virtual try-on experiences for clothing, accessories, and even fantastical digital attire. Creators can now design AR lenses that simulate various materials, textures, and physics, offering a fashion preview without a physical garment. This capability serves virtual retail and also opens up new avenues for artistic expression and character design within AR.

Technical Prowess: From Segmentation to Simulation

The underlying technology of 3D Body Mesh leverages advanced computer vision and machine learning algorithms to accurately map a user's body in three dimensions. This real-time mapping allows for:

- Precise Body Tracking: Accurate detection and tracking of body joints and contours.

- Dynamic Segmentation: Distinguishing the user's body from the background and applying effects only to the intended areas.

- Realistic Clothing Simulation: Algorithms that mimic how fabric drapes, folds, and moves with the body, ensuring virtual garments look natural.

- Full-Body Effects: Applying complex visual effects like smoke, fire, glitter, or intricate patterns that follow the body's movements and contours.
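The dynamic segmentation idea above, applying an effect only where the body is, can be illustrated with a per-pixel blend. This is a minimal sketch in plain Python, not Lens Studio code: the function name `apply_effect_to_body`, the nested-list image layout, and the 0.0 to 1.0 mask values are all assumptions made for illustration.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255


def apply_effect_to_body(
    frame: List[List[Pixel]],
    body_mask: List[List[float]],
    effect: List[List[Pixel]],
) -> List[List[Pixel]]:
    """Blend `effect` over `frame`, weighted per pixel by `body_mask`.

    A mask value of 0.0 keeps the camera pixel (background),
    1.0 shows the effect pixel (body), and values in between mix the two.
    """
    output = []
    for frame_row, mask_row, effect_row in zip(frame, body_mask, effect):
        output_row = []
        for base, alpha, fx in zip(frame_row, mask_row, effect_row):
            alpha = min(max(alpha, 0.0), 1.0)  # clamp out-of-range mask values
            blended = tuple(
                round((1.0 - alpha) * b + alpha * f) for b, f in zip(base, fx)
            )
            output_row.append(blended)
        output.append(output_row)
    return output


# Hypothetical 1x3 frame: background, body edge, body interior.
frame = [[(100, 100, 100), (100, 100, 100), (100, 100, 100)]]
mask = [[0.0, 0.5, 1.0]]
effect = [[(200, 0, 100), (200, 0, 100), (200, 0, 100)]]
result = apply_effect_to_body(frame, mask, effect)
```

A soft mask (values between 0 and 1) keeps the edge of the body from looking cut out, which is one reason segmentation output is usually treated as a weight, not a hard yes/no.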

Scan as Fashion Assistant: The Future of Retail and Personal Style

Another transformative upgrade is the enhanced 'Scan as Fashion Assistant' feature, which elevates the concept of virtual shopping and style guidance. This AI-powered tool allows users to simply scan a real-world outfit they admire – whether on a friend, a mannequin, or a magazine – and receive instant, intelligent recommendations. The assistant can identify key elements of the scanned outfit, suggest similar garments or accessories available for purchase, and even facilitate virtual try-ons using the new 3D Body Mesh technology. This bridges the gap between inspiration and acquisition, making fashion discovery more interactive and personalized than ever before.
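The article does not say how Scan matches a scanned outfit to products. One common approach to "find similar items" is to compare feature vectors by cosine similarity, and the sketch below shows that technique under stated assumptions: the catalog entries, the three-number feature vectors, and the function names are hypothetical, and nothing here reflects Snap's actual implementation.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def recommend_similar(
    query: Sequence[float],
    catalog: Dict[str, List[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Rank catalog items by similarity to the scanned item's features."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in catalog.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical catalog: each vector stands in for learned image features.
catalog = {
    "denim_jacket": [1.0, 0.0, 0.0],
    "leather_jacket": [0.9, 0.1, 0.0],
    "sneakers": [0.0, 0.0, 1.0],
}
scanned_outfit = [1.0, 0.0, 0.0]
suggestions = recommend_similar(scanned_outfit, catalog, top_k=2)
```

In a real system the vectors would come from an image model and the search would run over a much larger index, but the ranking step has the same shape.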

Empowering Consumers and Brands Alike

The implications for retail and personal styling are significant. For consumers, it offers an on-demand personal stylist in their pocket, helping them discover new styles and make informed purchasing decisions. For brands and retailers, it provides a channel for product discovery, engagement, and direct sales through AR. Imagine browsing a new collection of XR accessories and gear, then using the Scan feature to find complementary virtual items, or exploring physical counterparts in MetanexusXR's full collection. This feature streamlines the shopping experience and builds an interactive narrative around fashion and consumer goods. Developers can use it to create custom fashion discovery lenses that integrate directly with e-commerce platforms and expand brand reach in the digital realm.

Beyond Fashion: Connected Lenses and Visual Scripting

While 3D Body Mesh and Scan as Fashion Assistant are headline features, Lens Studio 4.0 delivers a suite of other powerful enhancements that broaden its appeal. Connected Lenses enable multiple users to engage in shared AR experiences simultaneously, fostering social interaction within virtual environments. Friends can now collaboratively build virtual sandcastles on a beach, play AR games together, or share synchronized effects, deepening the sense of presence and connection in AR. This is a game-changer for social AR and multiplayer gaming.
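Shared AR experiences need every participant to end up seeing the same state, even when updates arrive in a different order on each device. The sketch below shows one simple way to get that property, a last-writer-wins key-value store. It is an illustration only: the `SharedState` class, its methods, and the timestamp scheme are assumptions, not the Connected Lenses API.

```python
from typing import Any, Dict, Optional, Tuple


class SharedState:
    """Key-value state that converges when all replicas see the same updates."""

    def __init__(self) -> None:
        # key -> (timestamp, sender, value)
        self._entries: Dict[str, Tuple[int, str, Any]] = {}

    def apply(self, key: str, value: Any, timestamp: int, sender: str) -> bool:
        """Accept an update only if it is newer than what is stored.

        Ties on timestamp are broken by sender name, so every replica
        picks the same winner regardless of arrival order.
        """
        current = self._entries.get(key)
        if current is not None and (timestamp, sender) <= (current[0], current[1]):
            return False  # stale or duplicate update, ignore it
        self._entries[key] = (timestamp, sender, value)
        return True

    def get(self, key: str, default: Optional[Any] = None) -> Any:
        entry = self._entries.get(key)
        return default if entry is None else entry[2]


# Two hypothetical devices receive the same updates in opposite order.
updates = [("tower_height", 3, 10, "alice"), ("tower_height", 5, 12, "bob")]
device_a, device_b = SharedState(), SharedState()
for update in updates:
    device_a.apply(*update)
for update in reversed(updates):
    device_b.apply(*update)
```

After both loops, the two devices hold the same value for `tower_height`, which is the behaviour a shared sandcastle needs. Last-writer-wins discards the losing update, so it suits simple values better than edits that must be merged.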

For creators, the updated Visual Scripting interface is a boon. This no-code environment allows developers to create complex AR behaviors and interactions using a simple drag-and-drop, node-based system. This significantly lowers the barrier to entry for aspiring AR creators, enabling them to build sophisticated lenses without extensive coding knowledge. The visual scripting capabilities are further complemented by an expanded API Library, offering deeper integration with external applications and services, including robust support for Camera Kit and Creative Kit. This means developers can integrate Snapchat's powerful AR capabilities into their own apps or share AR content seamlessly across platforms. Exploring the full potential of these tools can unlock new levels of immersion, particularly when paired with high-quality VR accessories that enhance the sensory experience.

Performance and Accessibility

Lens Studio 4.0 also brings significant performance optimizations, ensuring smoother AR experiences across a wider range of devices. New templates, an expanded asset library, and improved debugging tools further streamline the creation process. The platform continues its commitment to making AR development accessible, empowering a global community of creators to innovate and monetize their work. Whether the goal is an intricate virtual world or a simple, engaging filter, the platform provides the tools to build it. For anyone looking to enhance their AR journey or dive deeper into the world of XR, MetanexusXR's VR accessories can provide the necessary edge.

The release of Lens Studio 4.0 marks a pivotal moment in the evolution of augmented reality. By introducing advanced features like 3D Body Mesh for hyper-realistic virtual try-ons and the AI-powered Scan as Fashion Assistant, alongside robust multiplayer capabilities and accessible visual scripting, the platform is not just updating; it's redefining the creative potential of AR. These advancements open up new frontiers for fashion, entertainment, social interaction, and beyond, making sophisticated AR experiences more accessible and immersive than ever. Creators, brands, and consumers alike stand to benefit from these innovations, solidifying AR's role as a transformative technology in our daily lives.

Discover the latest in XR technology and elevate your immersive experiences. Explore new arrivals at MetanexusXR today!

User Reviews:

AR_Designer_X: "Lens Studio 4.0 is a game-changer! The 3D Body Mesh is incredibly precise, allowing for virtual try-ons that look indistinguishable from real life. My fashion clients are blown away!"

FashionTechFan: "The Scan as Fashion Assistant is pure magic. I scanned an outfit from a magazine, and it instantly gave me links to similar items. It's like having a personal stylist, but way cooler."

Discussion Questions:

- How do you foresee 3D Body Mesh technology impacting the future of online retail and virtual try-ons for industries beyond fashion?

- What ethical considerations might arise with highly realistic virtual try-on technology, especially regarding body image and digital representation?

- Beyond fashion, what innovative applications can you imagine for the 'Scan as Fashion Assistant' feature, leveraging its AI object recognition capabilities?